Why ToMusic Reshapes The Earliest Stage Of Songmaking

The earliest barrier in music creation is rarely the absence of feeling. More often, it is the absence of translation. People know the mood they want, the pace they imagine, or the emotional role a song should play, yet they still cannot hear it. A lyric stays trapped on the page. A creative brief remains only a paragraph in a document. A scene has visual momentum but no sound that truly belongs to it. That is why an AI Music Generator can matter in a practical sense. It does not remove the need for taste, and it does not turn every prompt into something unforgettable. What it does, at least in tools like ToMusic, is reduce the distance between intention and audible draft, which is often the point where many ideas either begin to move or quietly disappear.

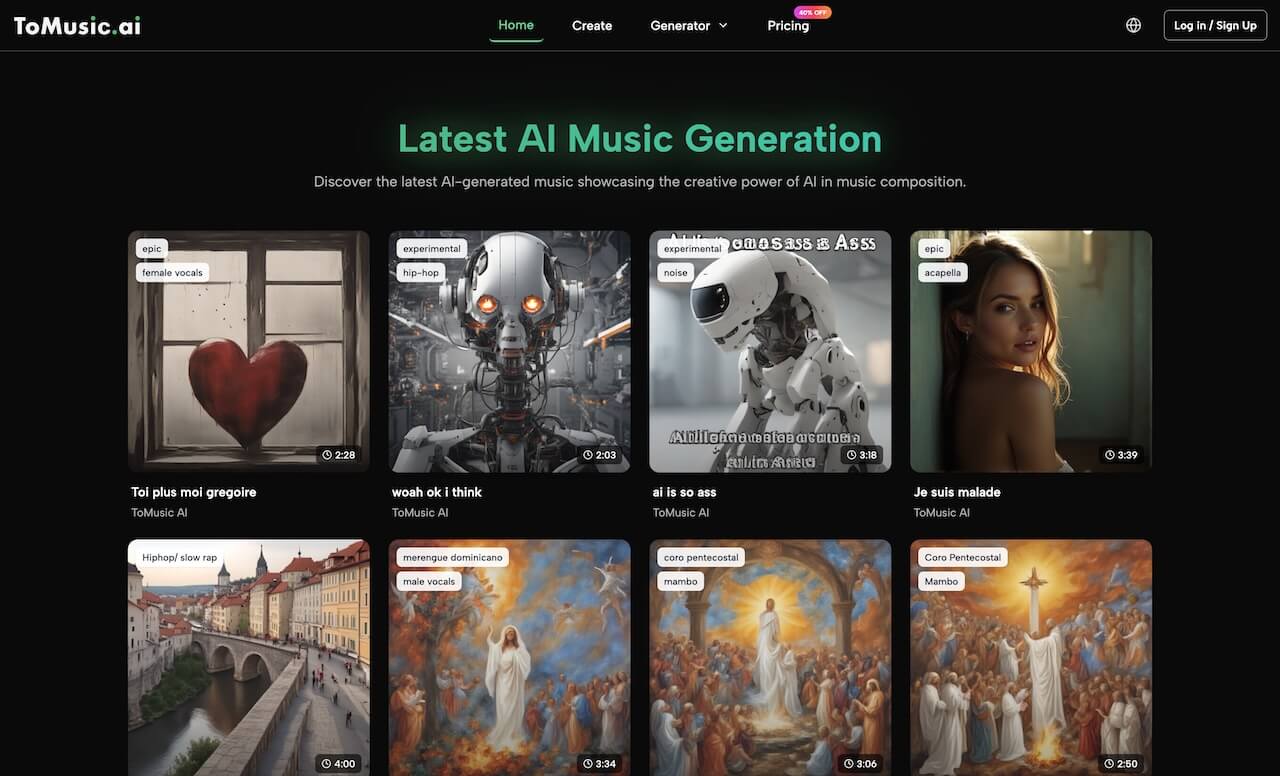

What makes ToMusic worth examining is not only that it can generate songs from text or lyrics, but that it presents music creation as a structured workflow rather than a novelty action. The platform combines prompt-based generation, lyric-based generation, multiple model versions, a library that stores results, and export options that make the output easier to reuse. In my reading of the product, this is the more important story. The platform is not only about creating music faster. It is about changing when music can enter the creative process. Instead of arriving at the end, after many other decisions have already hardened, it can arrive early enough to shape those decisions while they are still flexible.

Why Early Musical Direction Often Goes Missing

A surprising amount of creative work stays incomplete because sound enters too late. A video may already have pacing, color, and narrative structure, but still feel unfinished. A songwriter may already have words and a rough emotional center, but no way to test how those words should live inside melody. A small team may know exactly what tone a campaign should communicate, yet still rely on temporary stock music because original sound feels too slow or too expensive to explore at the first-draft stage.

This delay matters because music is not just an accessory. It influences timing, emotional intensity, and memory. The same visual sequence can feel distant, warm, cinematic, restrained, playful, or reflective depending on the soundtrack beneath it. In that sense, waiting too long to make musical decisions often means waiting too long to understand the full project.

Why Abstract Ideas Need Audible Form Quickly

In my experience, a creative idea becomes easier to evaluate the moment it can be heard. Before that point, people tend to project possibilities onto silence. They imagine a song might work. They imagine a lyric might feel intimate or dramatic. They imagine a trailer might become more persuasive once the right score appears. But imagination alone is unstable. Once a first version exists, even an imperfect one, the discussion becomes sharper.

A creator can ask more useful questions. Is the energy too high? Does the vocal delivery feel too polished for the message? Is the structure helping the lyric or flattening it? This is the practical value of early generation. It does not need to solve everything. It only needs to produce something real enough to think against.

Why Traditional Workflows Often Exclude Strong Ideas

Older music workflows tend to reward people who can already translate taste into technical decisions. They assume familiarity with arrangement, software, instrumentation, and editing. That works well for trained musicians and producers, but many people who need music today begin from a different place. They begin from language, story, or mood.

That is part of what makes ToMusic’s design notable. It assumes the user may not start with a DAW mindset. Instead, the platform invites them to start with prompts, lyrics, and visible categories such as style, genre, mood, voice, and tempo. That shift makes the entrance to music creation much wider.

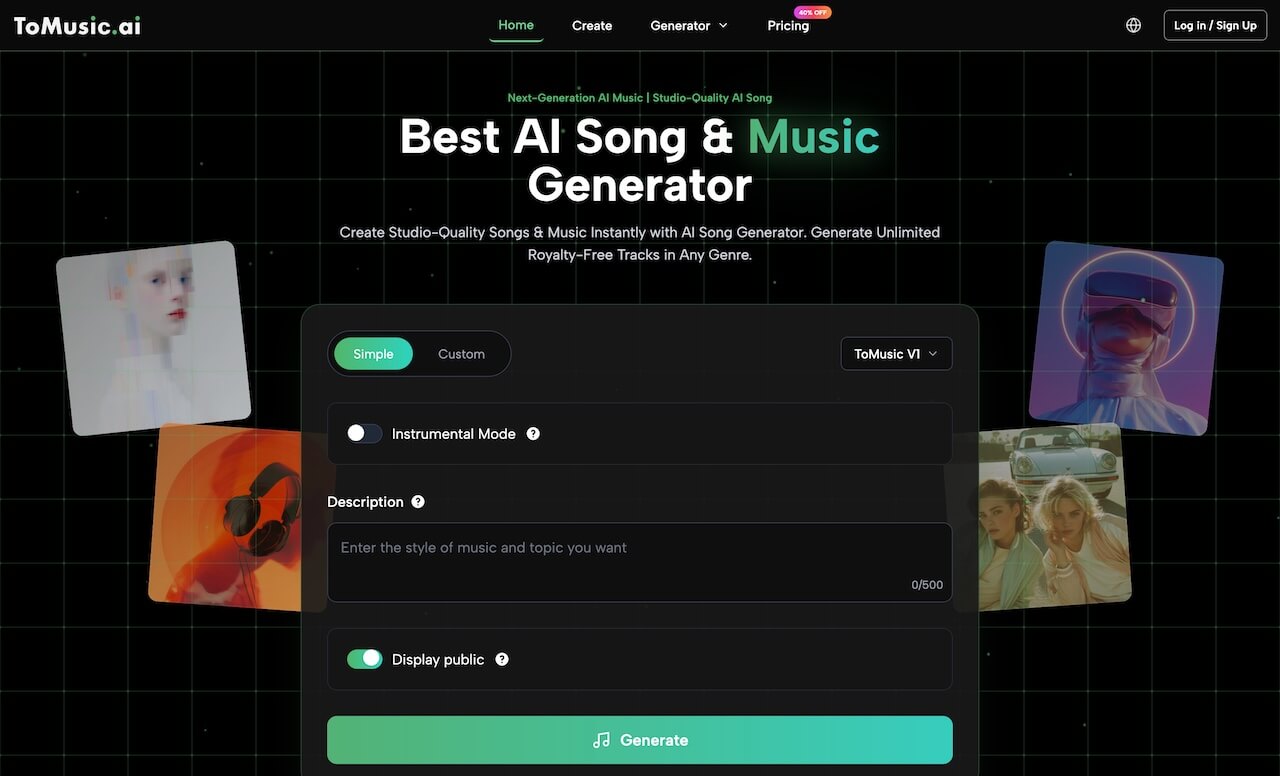

How ToMusic Turns Language Into Song Direction

At the center of the platform is a simple but significant idea: words can function as a practical control surface for music. The user does not begin by building notes manually. The user begins by describing the result.

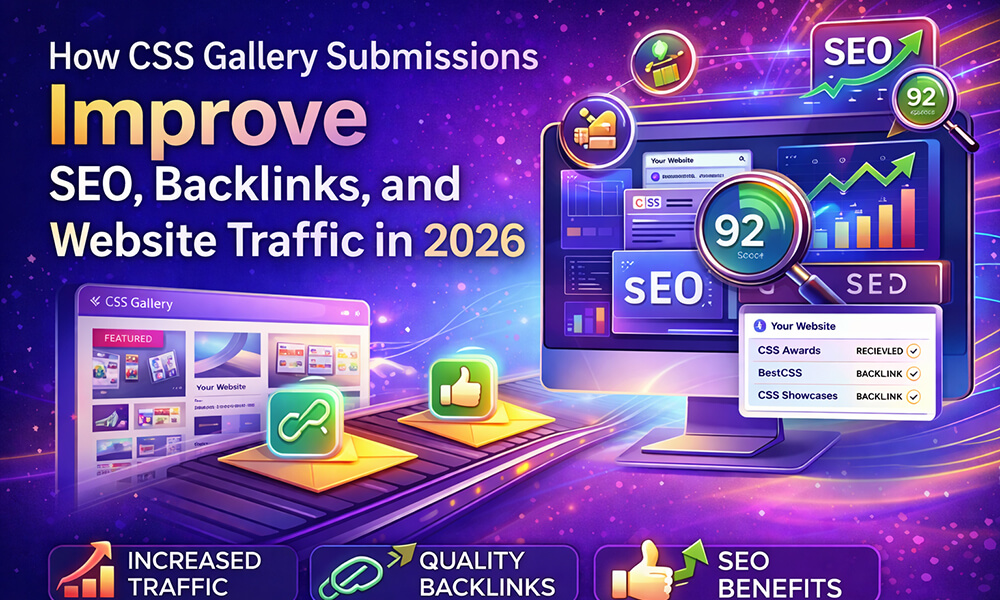

How Prompt Input Functions As Creative Briefing

The generator interface shows fields for title, styles, genre, moods, voices, tempos, and lyrics. That structure suggests the system is built to interpret creative intent in layers. A prompt can communicate emotional texture, musical shape, and end use all at once. Someone can ask for something softer, darker, slower, fuller, more intimate, or more rhythmic without needing to convert those instincts into production vocabulary first.

This matters because natural language is often how people already think about sound. They do not necessarily know the correct technical term, but they know what a scene should feel like. In my view, that is where the platform becomes useful. It reduces the friction between what the user can describe and what the system can render into a first version.

Why Lyrics Create A Different Kind Of Input

Lyric-based generation is especially important because lyrics are not just content. They already contain pacing, emphasis, repetition, and emotional architecture. A lyric often carries the skeleton of a song before melody has been chosen. ToMusic’s lyric workflow means the user can begin from that written structure and ask the platform to interpret it musically rather than starting from a blank instrumental idea.

That changes the creative dynamic. The task is no longer simply “make a song.” It becomes “reveal what kind of song these words want to become.” For writers and non-technical creators, that is a meaningful difference.

Why Multiple Models Add Useful Creative Range

The platform’s official pages present four model versions, V1 through V4, rather than one single system. The pricing page positions V1 and V2 as standard quality, V3 as stronger in harmonies and rhythms, and V4 as the flagship model with the best vocals. That model spread suggests the product is not trying to flatten every musical task into one default answer.

For users, that means model choice becomes part of the creative workflow. A quick concept draft may not require the same qualities as a vocal-led piece. A more rhythm-heavy idea may benefit from a different model than a lyric-centered one. In my observation, this makes the platform feel less like a vending machine and more like a toolkit with internal trade-offs.

Why ToMusic Feels More Like A Workspace

A lot of AI music tools focus heavily on the moment of generation. That makes sense because it is the most visually impressive part. But repeated use depends on what happens after the song is created. This is where ToMusic starts to feel less like a single-function generator and more like a working environment.

How The Music Library Supports Repeated Use

The Music Library page describes the library as a personal hub that automatically saves every generated track. It also says those tracks are stored with titles, tags, descriptions, lyrics, and generation parameters. I think this matters more than it first appears. The moment users generate more than a few songs, retrieval becomes part of creativity.

A promising draft may not fit the current project but may fit a future one. A near-miss can still teach the user which prompt structure worked better. A track that felt secondary on one day may suddenly become exactly right later. Without organized storage, those connections are easy to lose.

Why Metadata Changes The Learning Curve

Stored metadata also makes iteration more intelligent. Users are not only listening to results. They are seeing the conditions that produced them. Over time, that creates a practical education in prompting. People can notice that certain wording leads to fuller arrangements, or that certain lyric structures produce more coherent phrasing.

This does not mean the platform teaches music theory in a formal sense. It teaches something different and arguably more immediate: how descriptive choices affect musical outcomes. That is a useful kind of literacy for modern creators.

How Export Options Extend The Workflow

The pricing page also highlights WAV and MP3 downloads, along with stem extraction, vocal removal, commercial licensing, private generation, and storage benefits in higher plans. These details matter because they suggest the generated song is not meant to stay trapped inside the platform. It is meant to move into editing workflows, content pipelines, or further experimentation.

In other words, the output is treated as usable material, not merely an on-site demo. That makes the platform more relevant to people creating real projects rather than just testing the novelty of generation.

What The Real Workflow Looks Like On The Site

The visible process remains relatively short, which is one reason the platform feels approachable. The hidden complexity sits inside the generation system, while the user-facing steps stay compact.

Step One Starts With Prompt Or Lyrics

The first step is entering a descriptive prompt or custom lyrics. This defines the musical direction and gives the system enough information to shape the song around mood, style, and wording.

Step Two Selects Models And Visible Settings

The next step is choosing the model and the controls shown on the generator page, such as style, genre, mood, voice, and tempo. This is where the user influences not only what the song is about, but how the platform should interpret it.

Step Three Generates And Reviews The Song

Once the setup is ready, the platform generates a full song. At this point, the user can evaluate more than surface quality. They can ask whether the result fits the intended role, whether the pace feels right, and whether the arrangement supports the original idea.

Step Four Saves Or Exports The Result

After generation, the track is stored in the library and can be downloaded through the available export options. This closes the loop between creation, storage, and reuse without requiring a complicated post-processing path.

How ToMusic Differs From Simpler Generators

It is easy to assume that most music generators are roughly the same because many of them use similar language. The practical differences become clearer when you compare the workflow rather than the slogans.

| Dimension | Simpler Generator | ToMusic Approach |
|---|---|---|
| Main creative entry | Usually one short prompt | Prompt plus custom lyrics |
| Model structure | Often one default engine | Four models with different strengths |
| Song scope | Quick trial outputs | Full-song oriented generation |
| Project continuity | Limited history | Library with stored metadata |
| Output handling | Basic download focus | WAV, MP3, stems, vocal tools |
| Typical use pattern | Casual experiments | Ongoing drafting and reuse |

The value of this comparison is not to claim that one category is useless. Simpler tools can still be enjoyable and sometimes effective. The difference is that ToMusic appears more oriented toward repeat use and iterative decision-making, which is a separate and more demanding kind of usefulness.

Where This Platform Fits Best In Practice

The platform becomes easier to understand when placed inside real creative situations rather than broad marketing claims.

For Writers Testing Whether Words Want Music

Some writing should remain on the page. Some writing becomes clearer only after it is sung. A lyric-driven workflow gives writers a way to test that boundary without needing full production skills from the start.

For Editors Who Need Sound Earlier

Video editors and short-form creators often discover that pacing problems are actually soundtrack problems. A generated full song can help them test rhythm and emotional flow much earlier in the edit process.

For Small Teams Prototyping Tone

Not every team needs a polished final song on day one. Often what they need is a credible emotional prototype that helps them decide whether a project should lean warmer, bigger, calmer, or more direct. That is exactly the sort of work a language-led music platform can support.

Why This Helps People Who Work Alone

Solo creators often carry too many roles at once. They write, edit, package, publish, and revise their own work. Any tool that reduces friction in one category has an outsized effect. In that context, being able to move from a sentence or lyric to a complete audio draft is not just convenient. It protects momentum.

What The Limits Still Are

A balanced view makes the tool easier to trust.

Prompt Quality Still Shapes Output

A vague request often produces a vague song. The platform can do a lot, but it still depends on the clarity of the input. Prompts that name the mood, genre, tempo, and intended use of the track generally give the system more to work with than a single loose adjective.

One Pass Is Rarely The Final Answer

In my testing of tools in this category, the first generation is often diagnostic rather than definitive. It shows direction, but refinement still matters. Users may need to adjust phrasing, change models, or rethink the intended role of the track.

Taste Still Matters More Than Automation

Generation can accelerate the first draft, but it cannot fully replace judgment. Someone still has to decide what feels convincing, what serves the project, and what deserves to move forward.

Why The Shift Still Feels Important

Even with those limits, ToMusic points to a meaningful change in creative software. It treats language as a serious entry point to music creation, supports multiple kinds of interpretation through its model lineup, and preserves results in a way that encourages reuse rather than disposal. That does not guarantee perfect songs. What it does offer is something more fundamental: a way for more ideas to become audible early enough to shape the work while it is still alive and flexible.